Space-Based Surveillance for Drone Threats [Concept]

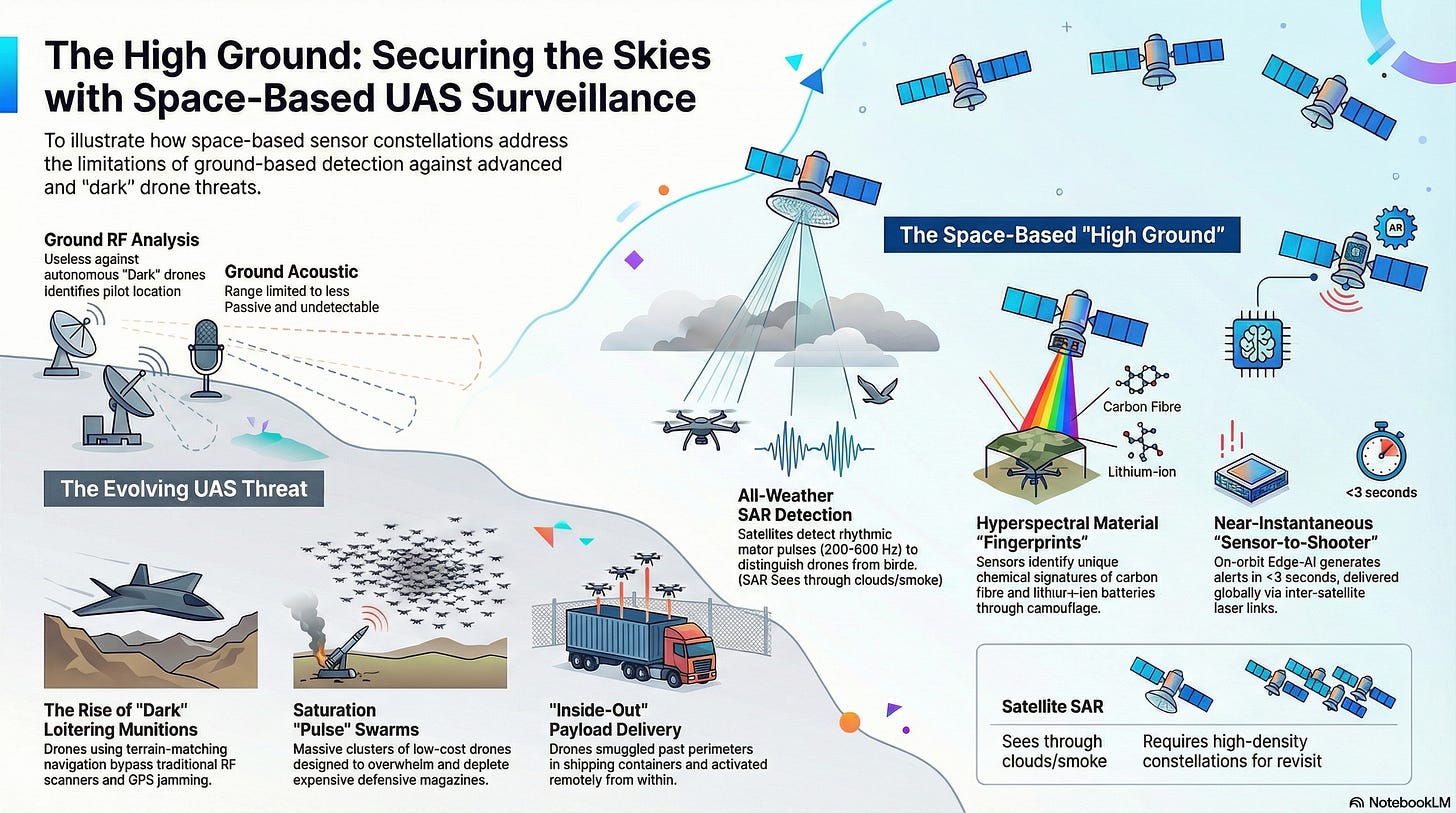

By leveraging advancements in Edge-AI, LEO mesh constellations and HAPSs, organizations may soon be able to detect “dark” threats that traditional radar and RF scanners miss.

The proliferation of unmanned aerial systems (UAS) has fundamentally altered the security landscape for critical national infrastructure, maritime trade, and border integrity.

This article provides a strategic analysis of a possible evolution from reactive, ground-based sensor networks to an integrated, space-based “High Ground” surveillance model.

By leveraging advancements in Edge-AI, LEO (Low Earth Orbit) mesh constellations and High-Altitude Pseudo-Satellites (HAPS), organizations may soon be able to detect “dark” threats that traditional radar and radio-frequency (RF) scanners may miss.

I. Example Drone Threats

The strongest use cases for these systems are where the cost of failure could be catastrophic.

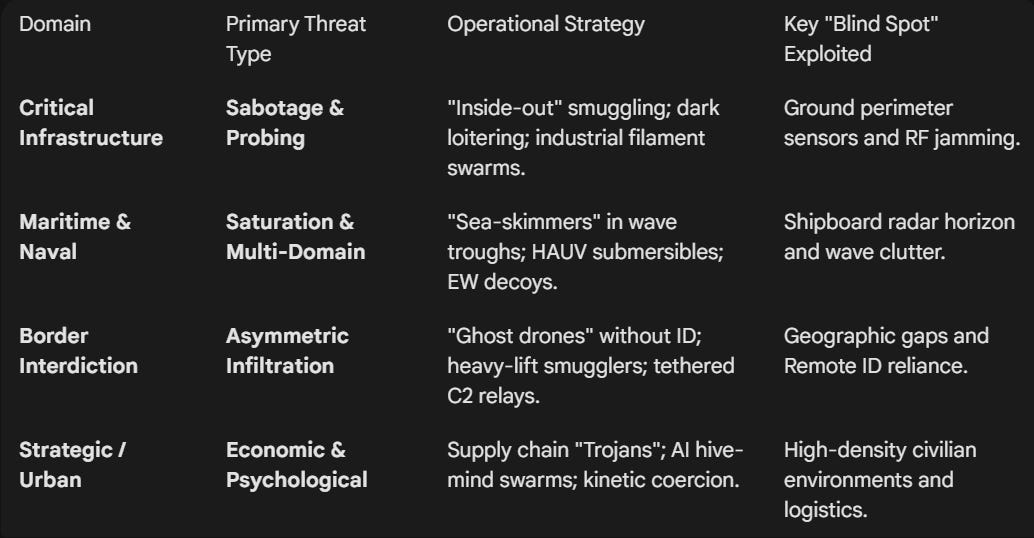

1. Critical Infrastructure

For energy grids, nuclear facilities, and water plants, the threat is defined by disruption and sabotage.

“Dark” Loitering Munitions:

Pre-programmed drones that navigate via visual terrain matching or Inertial Navigation (INS), making them immune to traditional GPS jamming or RF signal detection.

“Inside-Out” Payload Delivery:

Use of “Spider Web” tactics where drones are smuggled into sensitive perimeters inside legitimate shipping containers or delivery vehicles, only to be activated remotely once past the main gates.

Persistent Probing & Surveillance:

High-endurance drones that loiter at extreme altitudes to map facility weaknesses, security patrol patterns, and emergency response protocols without triggering low-level ground sensors.

Industrial Sabotage Swarms:

Small, inexpensive drones carrying specialized payloads (e.g., carbon-fiber filaments) designed to short-circuit power lines or clog cooling intakes at nuclear facilities.

2. Maritime & Naval

The maritime domain faces multi-domain saturation where the goal is to overwhelm shipboard defenses.

Low-Altitude “Sea Skimmers”:

Unmanned Surface Vessels (USVs) or low-flying UAVs that move in the “radar trough” between wave crests, staying effectively invisible to shipboard radar until the final seconds of engagement.

Hybrid Aerial-Underwater Vehicles (HAUVs):

Drones capable of flying into a theater and then submerging to act as a torpedo or stationary mine, complicating the target classification for sonar and radar teams.

Saturation “Pulse” Swarms:

Massive clusters of 50+ drones launched simultaneously to force a ship to deplete its limited magazine of expensive interceptor missiles on $500 targets.

Electronic Warfare (EW) Decoys:

Drones that emit high-powered radio signatures to mimic larger aircraft, forcing naval commanders to misallocate defensive resources while a “silent” lethal drone approaches from a different vector.

3. Border Interdiction Threats

At the border, the primary challenge is asymmetric smuggling and infiltration.

“Ghost Drones”:

Unmanned systems with no Remote ID, spoofed electronic signatures, or specialized radar-absorbent paint designed to slip through “blind spots” in ground-based picket lines.

Heavy-Lift Smuggling Platforms:

Large, modified agricultural drones capable of transporting 50kg+ of contraband (weapons, fentanyl) or sensitive equipment across unmonitored desert or mountain corridors.

Autonomous Reconnaissance Pickets:

Cartel-operated drones that monitor border patrol movements in real-time, using AI to identify gaps in sensor coverage and coordinate “diversionary” crossings.

Tethered Communication Relay Drones:

High-altitude tethered drones used by hostile actors to extend their own command-and-control (C2) reach deep into a neighboring country’s territory, allowing for remote operation of assets far from the physical border.

4. Emerging Strategic Threats

AI-Enabled Swarm Autonomy:

The transition from “single-pilot, single-drone” to a “hive mind” where drones communicate with each other to dodge counter-UAS measures collectively.

Supply Chain “Trojans”:

Drones built with malicious firmware “backdoors” that can be triggered by a specific satellite signal or a predetermined date to activate within a protected airspace.

Kinetic Coercion:

Using drones as “symbolic” weapons to disrupt major public events or airports, creating high-cost economic downtime and public panic with minimal investment.

II. Drone Detection Methods

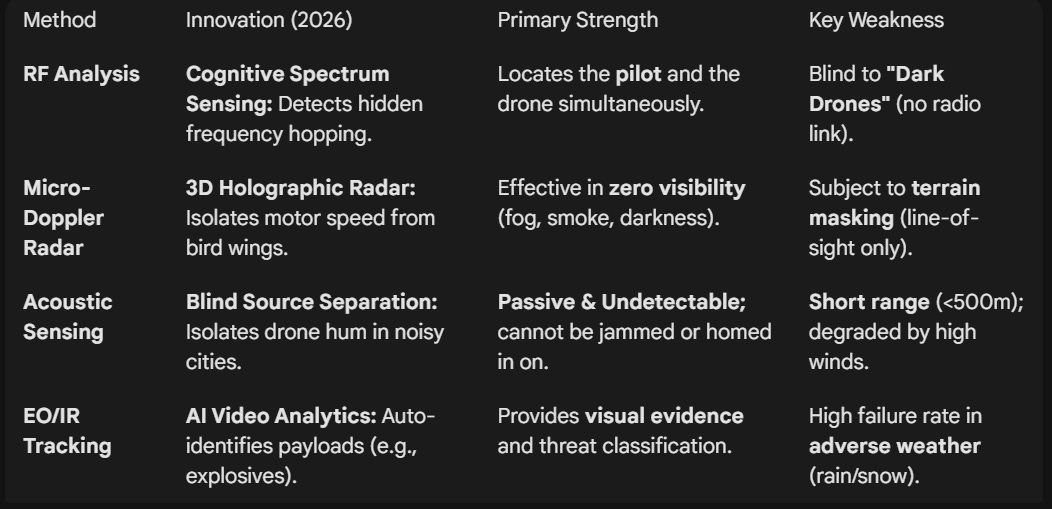

1. Radio Frequency (RF) Analysis

RF analysis remains the most cost-effective method for detecting commercial and non-military drones.

The Method:

Systems like RF Scanners monitor the 2.4GHz, 5.8GHz, and 4G/5G/LTE bands for the specific communication “handshake” between a drone and its pilot.

Innovation: Cognitive Spectrum Sensing.

Modern sensors can now detect “Frequency Hopping” and proprietary encrypted protocols that used to be invisible to standard scanners.

Strength:

Could identify the location of the pilot, not just the drone.

Weakness:

Useless against “Dark Drones” (fully autonomous drones flying without a radio link).

2. Micro-Doppler Radar (“Propeller Signature”)

Traditional air-defense radars often filter out small, slow objects to avoid “bird clutter.”

The Method:

Micro-Doppler Radar (e.g., Robin Radar IRIS) specifically looks for the high-speed rotational movement of drone propellers.

Even if the drone is hovering or stationary, the blades create a unique “flick” in the radar return.

Innovation: 3D Holographic Radar.

These systems create a 3D “bubble” of coverage that can distinguish between a drone and a bird by analyzing the flight pattern and the speed of the motor.

Strength:

Works in total darkness, fog, and through smoke.

Weakness:

Limited by “Line of Sight”; buildings or mountains create radar shadows.
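The size of the propeller “flick” can be estimated from basic Doppler physics: a blade tip moving toward the radar at speed v shifts the return by about 2v/λ. The sketch below is a rough illustration only; the 9.4 GHz radar frequency, 12 cm blade radius, and 6,000 RPM are assumed values, not figures from any specific product.

```python
import math

C = 299_792_458.0  # speed of light, m/s

def blade_tip_doppler_hz(radar_freq_hz, blade_radius_m, rpm):
    """Peak Doppler shift from a blade tip moving directly toward the radar."""
    wavelength_m = C / radar_freq_hz
    tip_speed_ms = 2 * math.pi * blade_radius_m * rpm / 60.0
    return 2 * tip_speed_ms / wavelength_m

# Assumed values for illustration only.
shift = blade_tip_doppler_hz(radar_freq_hz=9.4e9, blade_radius_m=0.12, rpm=6000)
print(f"Peak micro-Doppler shift: {shift:.0f} Hz")
```

Under these assumptions the blades spread the return over several kilohertz even when the airframe itself has no radial velocity, which is why a hovering drone can still stand out.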

3. Acoustic Array Sensing (“Directional Ear”)

Acoustic sensors use arrays of high-fidelity microphones to “listen” for the specific ultrasonic and audible hum of drone motors.

The Method:

Machine Learning algorithms compare the sound profile against a library of thousands of drone motor signatures.

Innovation: Blind Source Separation (BSS).

This allows the system to isolate a single drone’s sound even in a noisy environment like a city center or a busy shipyard.

Strength: Passive and Undetectable.

Since the sensor doesn’t emit any signals, an enemy drone cannot “jam” it or know that it is being tracked.

Weakness:

Range is typically limited to <500m and can be affected by high winds.
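The library comparison described above can be pictured as a nearest-signature lookup. The sketch below swaps in plain cosine similarity for the machine-learning model; the signature library, the four frequency bins, and the 0.9 threshold are all hypothetical.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def best_match(observed, library, threshold=0.9):
    """Return (name, score) of the closest signature, or (None, score) if too weak."""
    name, score = max(
        ((n, cosine_similarity(observed, sig)) for n, sig in library.items()),
        key=lambda item: item[1],
    )
    return (name, score) if score >= threshold else (None, score)

# Hypothetical signature library: relative energy in four frequency bins.
LIBRARY = {
    "quad_small": [0.1, 0.8, 0.3, 0.1],
    "hex_heavy": [0.7, 0.2, 0.1, 0.4],
}

match, score = best_match([0.12, 0.75, 0.35, 0.05], LIBRARY)
print(match, round(score, 3))
```

A fielded system would likely use learned embeddings and far richer spectra, but the thresholded "closest known signature" step is the same idea.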

4. Electro-Optical & Infrared (EO/IR) Tracking

Once a drone is detected by radar or RF, EO/IR cameras provide the “Visual Confirmation.”

The Method:

High-zoom PTZ (Pan-Tilt-Zoom) cameras lock onto the target.

Thermal imaging is used to spot the heat signature of the drone’s battery and motors against the cold sky.

Innovation: AI-Informed Video Analytics.

The system automatically “boxes” the drone and follows it, identifying its payload (e.g., “Camera only” vs. “Explosive device”) without human intervention.

Strength:

Provides forensic evidence for prosecution and confirms the threat level.

Weakness:

Limited by weather (heavy rain/snow) and cannot “search” the whole sky effectively on its own.

III. Satellite-based Monitoring Modalities

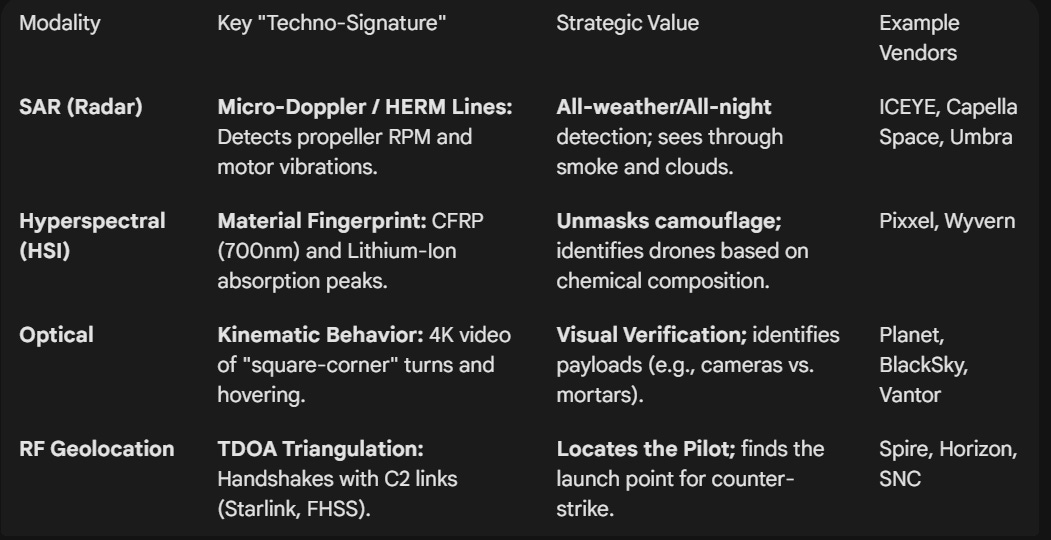

1. Synthetic Aperture Radar (SAR): The “All-Weather Eye”

Technical Logic:

The satellite emits microwave pulses that bounce off the Earth.

Because metal and carbon fiber (typical drone materials) reflect microwaves differently than water or soil, drones appear as high-contrast “bright spots.”

Operational Value:

SAR can see through heavy cloud cover, smoke, and total darkness.

It is a primary way to detect a drone launch during a storm or at midnight in a remote desert.

Capability:

Interferometric SAR (InSAR) can potentially detect the minute “vibration patterns” of a drone’s motors, allowing the system to distinguish a group of drones from a flock of birds by their rhythmic signature.

The Signature:

Even if a drone is hovering (zero radial velocity), its propeller blades create a secondary Doppler shift.

The Marker:

Satellites detect HERM (Helicopter Rotor Modulation) lines. AI could be used to count the blades and calculate the RPM from orbit, distinguishing a quadcopter from a hexacopter.

Bird vs. Drone:

A bird’s wing-beat is a low-frequency, non-linear signature (<20 Hz).

A drone’s motor is a high-frequency, constant rhythmic pulse (200–600 Hz), making it an unambiguous “bright spot” for radar.
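As a rough illustration of the bird-versus-drone split, the sketch below converts rotor speed and blade count into a blade-pass frequency and applies the two bands quoted above. Treating the modulation frequency as RPM/60 times the blade count is a simplifying assumption, and the 9,000 RPM two-blade rotor is a hypothetical example.

```python
def blade_flash_hz(rpm, blades_per_rotor):
    """Rate at which blades pass a fixed direction, in Hz."""
    return rpm / 60.0 * blades_per_rotor

def classify_modulation(freq_hz):
    """Apply the bands quoted in the text: <20 Hz bird, 200-600 Hz drone."""
    if freq_hz < 20:
        return "bird"
    if 200 <= freq_hz <= 600:
        return "drone"
    return "unknown"

# Hypothetical rotor: two blades spinning at 9,000 RPM.
flash = blade_flash_hz(rpm=9000, blades_per_rotor=2)
print(flash, classify_modulation(flash))
```

Anything that falls between the two bands is deliberately left as "unknown" rather than forced into a class.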

Example Vendors:

ICEYE: Pioneers in high-revisit microsatellite SAR constellations.

Capella Space: Specializes in high-resolution SAR for sub-daily monitoring.

Umbra: Provides high-resolution SAR data with “open” pricing models for rapid acquisition.

2. Hyperspectral Imaging (HSI): The “Material Fingerprint”

While the human eye sees three colors (Red, Green, Blue), HSI sensors see hundreds of narrow spectral bands.

Technical Logic:

Every material has a unique “Spectral Fingerprint.” Carbon fiber, lithium-ion batteries, and fiberglass leave distinct chemical traces in the light spectrum.

Operational Value:

HSI allows satellites to “unmask” drones that are painted in camouflage or hidden under netting.

If the spectral signature doesn’t match the surrounding vegetation, the satellite flags it as a Target of Interest (TOI).

Capability:

Satellites could use Real-Time Spectral Registration to identify specific drone models based on their unique chemical coating or the heat-signature of their electronics.

The Signature:

Satellites scan for the unique reflectance curves of Carbon Fiber (CFRP) and Lithium-Ion.

The Marker:

CFRP has a sharp absorption peak at 700 nm, while natural vegetation reflects heavily in the “Green Bump” (550 nm) and “Red Edge” (700-750 nm).
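One common way to test whether a pixel "matches the surrounding vegetation" is the spectral angle: treat each spectrum as a vector and measure the angle between pixel and background. The sketch below assumes that approach; the five-band reflectance values and the 0.2 rad threshold are hypothetical, and the dark, flat pixel is only a stand-in for a man-made composite, not measured CFRP data.

```python
import math

def spectral_angle(pixel, reference):
    """Angle in radians between two non-zero reflectance spectra."""
    dot = sum(p * r for p, r in zip(pixel, reference))
    norm = math.sqrt(sum(p * p for p in pixel)) * math.sqrt(sum(r * r for r in reference))
    cos_angle = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.acos(cos_angle)

def is_target_of_interest(pixel, background, max_angle_rad=0.2):
    """Flag pixels whose spectral shape departs from the background."""
    return spectral_angle(pixel, background) > max_angle_rad

# Hypothetical reflectance in five bands: blue, green, red, red edge, near-infrared.
VEGETATION = [0.05, 0.10, 0.05, 0.45, 0.50]
leaf_pixel = [0.06, 0.11, 0.05, 0.43, 0.52]
dark_flat_pixel = [0.04, 0.04, 0.04, 0.04, 0.05]

print(is_target_of_interest(leaf_pixel, VEGETATION))
print(is_target_of_interest(dark_flat_pixel, VEGETATION))
```

Because the angle depends on spectral shape rather than brightness, a shaded leaf still reads as vegetation while a spectrally flat object does not.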

Example Vendors:

Pixxel: The global leader in high-resolution hyperspectral constellations for environmental and security monitoring.

Wyvern: Specializes in high-spatial-resolution hyperspectral data for detailed material analysis.

3. Optical Pan-Sharpening: The “High-Res Verification”

Optical monitoring is moving from “taking a picture” to “continuous stream analysis.”

Technical Logic:

By merging a high-resolution black-and-white (panchromatic) image with lower-resolution color (multispectral) data, satellites achieve sub-30cm resolution.
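To make the merge concrete, the sketch below uses the Brovey transform, one simple pan-sharpening method chosen here for illustration (the article does not say which algorithm any vendor uses). Each color band is rescaled by the ratio of the panchromatic value to the pixel's mean color intensity. The pixel values are hypothetical.

```python
def brovey_sharpen(ms_pixel, pan_block):
    """Sharpen one low-res multispectral pixel using its high-res pan block.

    ms_pixel  -- tuple of band values for the coarse pixel
    pan_block -- rows of panchromatic values covering the same ground area
    """
    intensity = sum(ms_pixel) / len(ms_pixel)
    return [
        [tuple(band * pan / intensity for band in ms_pixel) for pan in row]
        for row in pan_block
    ]

ms_pixel = (0.2, 0.4, 0.6)            # hypothetical (R, G, B) reflectance
pan_block = [[0.4, 0.8], [0.2, 0.4]]  # hypothetical 2x2 panchromatic block
sharp = brovey_sharpen(ms_pixel, pan_block)
for row in sharp:
    print(row)
```

The output keeps the color ratios of the coarse pixel while taking its brightness detail from the panchromatic block.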

Operational Value:

This can be used for “Visual Identification” (VID).

Once SAR or HSI detects a blip, the optical sensor zooms in to confirm if the drone is carrying a payload, such as a camera or an explosive canister.

Capability: Video-from-Space.

High-cadence LEO constellations could provide 10-15 seconds of 4K video of a moving drone, allowing ground teams to calculate its exact flight path and intended destination.

The Signature:

Unlike birds, drones exhibit “Square-Cornering” (instant 90-degree turns) and precise hovering that defy biological physics.

The Marker:

Sub-30cm Pan-Sharpened imagery allows the system to identify the payload (e.g., distinguishing an Amazon delivery box from a 40mm mortar round).

Example Vendors:

Vantor: The industry gold standard for 30cm high-resolution imagery and intelligence.

Planet: Utilizes a massive “flock” of satellites to provide daily global imagery and taskable 50cm video via its Pelican constellation.

BlackSky: Specializes in ultra-low latency, real-time event monitoring with frequent revisit rates.

4. RF Geolocation: The “Signal Sniffer”

If a drone is communicating with a pilot or a satellite, it is “loud” in the radio spectrum.

Technical Logic:

Using a technique called TDOA (Time Difference of Arrival), three or more satellites listen for the drone’s radio emissions (2.4GHz, 5.8GHz, or SATCOM).

By measuring the nanosecond-scale differences in when the signal reaches each satellite, they fix the drone’s position by multilateration (often loosely called triangulation).

Operational Value:

This could be an effective way to find the Launch Point and the Pilot’s Location, enabling a counter-strike against the source rather than just the drone.

The Signature:

Frequency Hopping Spread Spectrum (FHSS) signals in the 2.4GHz or 5.8GHz bands.

The Marker:

Even if a drone is “Dark” on the usual control bands, it may still emit a periodic “Heartbeat” signal to a satellite C2 link (Starlink/Iridium).

TDOA Triangulation:

By measuring the nanosecond difference in signal arrival across three satellites, the system could locate the Pilot’s Ground Station within 10 meters.
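The TDOA idea can be sketched as a search for the ground point whose predicted arrival-time differences best match the measured ones. The example below is a toy under heavy assumptions: flat-Earth coordinates in kilometers, three stationary satellites at a hypothetical 550 km altitude, noiseless timing, and a coarse brute-force grid that happens to contain the emitter. A real system would use a least-squares solver and correct for satellite motion and clock error.

```python
import math

C_KM_PER_S = 299_792.458  # speed of light

def tdoas(emitter, receivers):
    """Arrival-time differences (s) of each receiver relative to the first."""
    times = [math.dist(emitter, r) / C_KM_PER_S for r in receivers]
    return [t - times[0] for t in times[1:]]

def locate(measured, receivers, step_km=10, extent_km=300):
    """Brute-force search of ground points (z = 0) for the best TDOA fit."""
    def misfit(point):
        predicted = tdoas(point, receivers)
        return sum((p - m) ** 2 for p, m in zip(predicted, measured))
    grid = [(x, y, 0) for x in range(0, extent_km + 1, step_km)
                      for y in range(0, extent_km + 1, step_km)]
    return min(grid, key=misfit)

# Hypothetical geometry: three satellites at 550 km, coordinates in km.
SATS = [(0, 0, 550), (300, 0, 550), (0, 300, 550)]
true_emitter = (120, 80, 0)
estimate = locate(tdoas(true_emitter, SATS), SATS)
print(estimate)

# Timing precision sets the floor on accuracy: light covers about 0.3 m per ns.
metres_per_ns = C_KM_PER_S * 1e-9 * 1000
print(f"1 ns of timing error ~ {metres_per_ns:.2f} m of range difference")
```

Since light travels roughly 0.3 m per nanosecond, a 10-meter fix implies timing agreement between satellites on the order of tens of nanoseconds, before geometry is taken into account.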

Example Vendors:

Spire Global: Operates one of the world’s largest RF-sensing constellations for global signal intelligence (SIGINT).

Horizon Technologies: Focuses on maritime and border-based RF monitoring through its Amber constellation.

Sierra Nevada Corporation (SNC): Recently launched the Vindlér constellation specifically for geolocating emitters and GPS jammers.

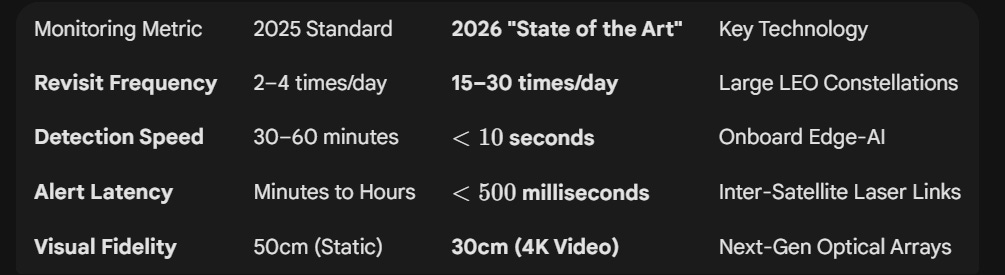

IV. Real-Time Satellite Monitoring Limitations

1. Revisit Rates (Achieving “The Unblinking Eye”)

A single satellite is useless for drone defense; it is the density of the shell that matters.

High-Cadence Tasking:

Leading constellations like BlackSky and Planet (Pelican) now offer up to 15–30 revisits per day over high-priority “Locations of Interest” (LOIs).

This means a new high-resolution scan could be generated roughly every 45 to 90 minutes.
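The interval follows from simple division. The sketch below assumes revisits are spread evenly across the chosen window, which real orbits rarely manage, so actual gaps could be longer at some times of day.

```python
def mean_revisit_interval_min(revisits_per_day, window_hours=24.0):
    """Average minutes between passes if they were evenly spaced."""
    return window_hours * 60.0 / revisits_per_day

for revisits in (15, 30):
    print(revisits, "revisits/day ->", mean_revisit_interval_min(revisits), "min")
```

Passing a shorter `window_hours` (for example, daylight hours only) shows how the same number of passes tightens the interval within that window.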

The “Dawn-to-Dusk” Coverage:

Unlike older systems that only imaged at 10:00 AM local time, modern constellations use “inclined orbits” to provide staggered coverage from sunrise to sunset.

Persistent “Shadowing”:

For active conflict zones, “Tasking Priority” could allow a customer to lock multiple satellites into a sequence, creating a 10-minute window of continuous 4K video-from-space to track a moving drone swarm across a city.

2. Latency (The “Sensor-to-Shooter” Timeline)

Detection is only as good as the reaction it triggers.

A. On-Orbit Edge Processing (<3 Seconds)

Modern satellites like Planet Pelican (equipped with NVIDIA Jetson AI platforms) no longer send raw images to Earth.

The Workflow:

The satellite’s onboard AI could scan the raw sensor feed, identify the “Drone Techno-Signature” (SAR vibration or HSI material anomaly), and generate a text-based alert (coordinates, velocity, type).

Speed:

This processing happens in near-real-time on the satellite itself.

B. Inter-Satellite Laser Links (<200 Milliseconds)

Once the alert is generated, it doesn’t wait for the satellite to fly over a base.

The Workflow:

Using Optical Inter-Satellite Links (OISL), the alert is “bounced” via laser from one satellite to another across the LEO mesh (e.g., SpaceX Starshield). Iridium runs a comparable mesh, but over Ka-band radio crosslinks rather than lasers.

Speed:

The alert travels at the speed of light through the vacuum of space, reaching the commander’s desk in under 0.2 seconds, regardless of where the drone was detected globally.
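The 0.2-second figure can be sanity-checked with a light-time estimate. The sketch below assumes a worst-case path halfway around the planet along a 550 km shell; the hop count and the 2 ms per-hop processing delay are hypothetical placeholders, not measured values.

```python
import math

C_KM_PER_S = 299_792.458   # speed of light in vacuum
EARTH_RADIUS_KM = 6371.0   # mean Earth radius

def relay_delay_ms(path_km, hops=0, per_hop_ms=0.0):
    """Propagation time over the path plus a fixed processing cost per hop."""
    return path_km / C_KM_PER_S * 1000.0 + hops * per_hop_ms

# Worst case: halfway around the planet along an assumed 550 km shell.
half_orbit_km = math.pi * (EARTH_RADIUS_KM + 550.0)
propagation_only = relay_delay_ms(half_orbit_km)
with_hops = relay_delay_ms(half_orbit_km, hops=20, per_hop_ms=2.0)
print(f"{propagation_only:.1f} ms propagation only")
print(f"{with_hops:.1f} ms with 20 hops at 2 ms each")
```

Under these assumptions propagation alone takes well under 100 ms, so the budget is dominated by how much time each relay hop and the final downlink add.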

V. High-Altitude Pseudo-Satellites (HAPS)

While satellites provide global coverage, they are hampered by orbital mechanics (they must move to stay up). Near Space platforms, or High-Altitude Pseudo-Satellites (HAPS), solve this by “loitering” over a single coordinate for months, potentially providing the “Unblinking Eye” that tactical drone defense requires.

1. Why Near Space could be the Primary Detection Layer

Zero-Latency “Edge” Processing:

Because HAPS are physically 20-100 times closer to the ground than LEO satellites, signal latency is negligible (<1ms).

This allows AI-driven “Kill Chains” to activate the moment a drone is detected.

Continuous Staring Capability:

Unlike a satellite that passes over a target in minutes, a HAPS platform could maintain a fixed station for up to 200 days, ensuring there are zero “blind windows” for a drone to exploit.

Ultra-High Resolution:

At 20 km, a standard optical sensor can achieve 5-10 cm resolution.

This allows security teams to not only see the drone but identify its specific payload, serial numbers, or even the chemical signature of its battery.
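The resolution figure follows from the usual ground-sample-distance relation: altitude times pixel pitch divided by focal length. The sketch below assumes a nadir-pointing camera with a 5 µm pixel pitch and a 1 m or 2 m focal length; these optics are hypothetical, chosen only to show how the 5-10 cm range can arise at 20 km.

```python
def ground_sample_distance_cm(altitude_m, pixel_pitch_um, focal_length_m):
    """Ground footprint of one pixel, in centimeters, looking straight down."""
    return altitude_m * (pixel_pitch_um * 1e-6) / focal_length_m * 100.0

# Hypothetical optics: 5 micron pixels behind a 1 m or 2 m focal length.
gsd_haps_1m = ground_sample_distance_cm(20_000, 5.0, 1.0)
gsd_haps_2m = ground_sample_distance_cm(20_000, 5.0, 2.0)
gsd_leo_1m = ground_sample_distance_cm(500_000, 5.0, 1.0)
print(f"HAPS at 20 km: {gsd_haps_2m:.0f}-{gsd_haps_1m:.0f} cm per pixel")
print(f"Same camera at 500 km: {gsd_leo_1m:.0f} cm per pixel")
```

The same camera carried to an assumed 500 km orbit would see 25 times coarser detail, which is the core of the altitude argument.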

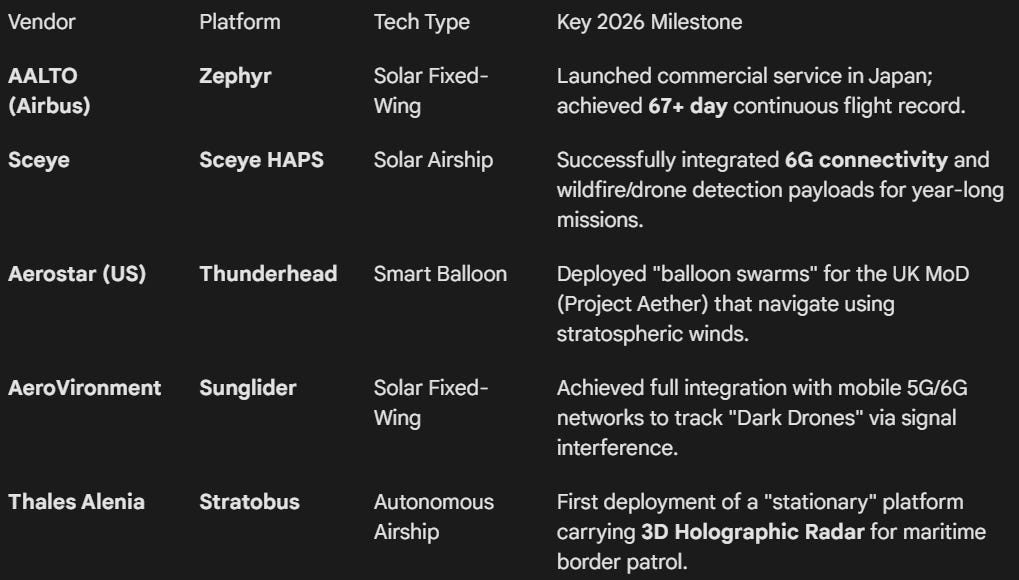

2. Example Vendors & Platforms

The current Near Space market is divided into Fixed-Wing (Solar) and Lighter-than-Air (Balloon/Airship) platforms.

3. Stratospheric Detection Modalities

Near Space platforms could use a specialized suite of sensors that perform differently than their orbital counterparts:

Strat-Observer:

A steerable high-res camera that could reach any point in a 40 x 30 km area instantly.

It could provide live 4K video-from-the-stratosphere to track moving swarms.

Acoustic Pickets:

Experimental HAPS could carry downward-facing microphone arrays.

At 60,000 ft, the air is thin but quiet; AI could filter atmospheric noise to “hear” the ultrasonic signature of a drone swarm from miles away.

Direct-to-Device (D2D) Monitoring:

Vendors like AALTO and Sceye could use their 5G/6G connectivity payloads as “passive sensors.”

If a drone flies through the signal beam between the HAPS and a ground user, the resulting drop in signal strength (or change in multipath interference) could flag the drone’s position immediately.

4. The “HAPS-as-a-Service” Model

Organizations could subscribe to “Detection Bubbles.”

The Coverage:

A single HAPS platform could cover up to 7,500 km² (roughly the size of a small country or a massive naval base).
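To give the 7,500 km² figure a physical scale, the sketch below converts it to the radius of an equivalent circle and to the look angle at its edge. It assumes a circular footprint, a flat Earth, and a platform altitude of 20 km.

```python
import math

def footprint_radius_km(area_km2):
    """Radius of a circle with the given area."""
    return math.sqrt(area_km2 / math.pi)

def edge_elevation_deg(altitude_km, ground_range_km):
    """Elevation angle of the platform as seen from the footprint edge."""
    return math.degrees(math.atan2(altitude_km, ground_range_km))

radius = footprint_radius_km(7500.0)
elevation = edge_elevation_deg(20.0, radius)
print(f"Footprint radius: {radius:.1f} km")
print(f"Elevation at the edge: {elevation:.1f} degrees")
```

A low elevation angle at the edge means longer slant ranges and more terrain masking there, so effective detection coverage may be smaller than the nominal footprint.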

The Deployment:

Platforms could be launched from “Aaltoports” (dedicated HAPS runways in Kenya, Japan, and the US) and steered remotely to the Location of Interest (LOI).